Series

↗(left to work on my own thing)

The Idea

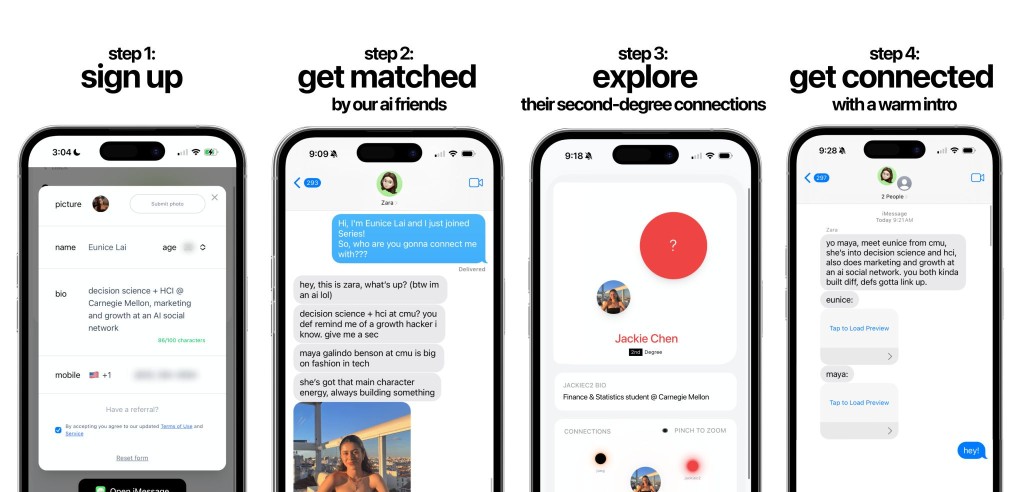

the idea was to have ai friends that you can chat and tell who do you wanna connect w, and they get the person from your 1st and 2nd degree connections, and make a gc for warm intros.

The Need

social media made everyone connected but nobody actually connected. platforms optimize for scroll time, not relationships — and networking still runs on cold DMs, awkward linkedin messages, and hoping you bump into the right person.

your friends already know the people you need to meet. there was just no way to tap into that graph without spamming everyone.

The Problem Space

when I joined Series, the core challenge wasn't just "build a matchmaking app." it was a set of deeply interconnected technical problems that all had to be solved together:

knowledge graphs + semantic matching

the first big question: how do you represent human relationships mathematically? we built knowledge graphs that mapped entities (people, interests, events, preferences) and their connections. this wasn't a standard social graph — it needed to capture latent preferences, behavioral signals, and contextual compatibility in ways that traditional approaches couldn't.

some of this is confidential — can't go into exact architecture specifics.

then came the vector space — what parameters define a person's "matchability"? how many dimensions do you need to capture the nuance of why two people should meet? we landed on high-dimensional embeddings in Pinecone, with custom re-indexing and reranking pipelines to keep semantic search fast and relevant. the trick was balancing dimensionality — too few and you lose signal, too many and retrieval gets slow at scale.

reverse-engineering iMessage

this work is confidential but was highly inspired from open-sourced repos.

this was the part i did on my own. Apple doesn't give you an API to build on top of iMessage — so i had to reverse-engineer the protocol to make our system work as a native iMessage experience. the result was that users could interact with our AI matchmaker through their existing messaging app with zero setup. it felt native because, from iMessage's perspective, it basically was.

infra + scalability

once we had the interaction layer, the next problem was: how do you make this fast at scale? when someone asks "find me someone who..." that query has to traverse a high-dimensional vector space, score candidates against a personalized compatibility model, and return results — all with sub-second latency.

- vector search at scale — we needed sub-second retrieval across a growing user base. the dimensionality of the vector space was carefully tuned — (the exact configuration is confidential) — but the tradeoff was always between expressiveness and query latency.

- Celery + Redis — the backend was built around message queues and Redis to handle the async nature of iMessage interactions. messages come in unpredictably, processing is non-trivial, and responses need to feel instant. we designed the pipeline so that heavy computation (graph traversal, vector search, LLM inference) happened asynchronously while maintaining conversational flow.

- GCP Cloud Run + Docker — containerized deployments with CI/CD through GitHub Actions, rollback pipelines, service accounts

- MongoDB — flexible schema for rapidly evolving user and relationship models

- OAuth + Twilio — auth flows and SMS webhooks for the iMessage integration layer

we made deliberate choices about event-driven vs. request-response patterns, caching strategies for repeated queries, and how to partition state between ephemeral (Redis) and persistent (database) layers. one wrong pattern and the whole matching pipeline would bottleneck. (confidential — can't share the exact infra topology.)

the agentic layer — prompts, tone, and safety

this was, honestly, the hardest part. building the AI agent wasn't just about making it smart — it was about making it safe, appropriate, and culturally fluent.

gen-z tone calibration — i read a significant number of research papers on conversational AI tone, generational communication patterns, and trust-building in AI interactions. the agent had to feel like talking to a friend, not a chatbot. getting this right meant constant iteration on prompt engineering and evaluation frameworks.

agentic framework selection & optimization — we evaluated multiple agentic frameworks and ultimately chose one that gave us the right balance of control and flexibility. prompt optimization was an ongoing process — not just for quality, but for latency and token efficiency. agents needed to make tool calls reliably — search the graph, pull profiles, create group chats — without hallucinating or breaking flow. (framework specifics are confidential.)

safety + alignment — the hardest problem

this was the thing i cared about most. the system had to handle:

- adversarial users trying to jailbreak or attack the system

- NSFW content that could never surface in responses

- minors potentially using the platform — this was non-negotiable; the AI had to be safe by default

- hallucination prevention — we couldn't let the AI fabricate information about other users or make promises the system couldn't keep

- information leakage — the AI should never reveal things it shouldn't, even under clever prompting

i built layered safety systems — input filtering, output validation, behavioral guardrails, and monitoring — to ensure that the AI stayed within bounds no matter what was thrown at it. making sure it never hallucinated connections, never leaked private info, and never let harmful content through. (the specific safety architecture is confidential, but this was the system i'm most proud of building.)

Stack

protocols & systems iMessage Protocols · Agentic Systems · Cache Optimisation · System Design

AI & ML Transformers · LLMs · Prompt Engineering · Pinecone · Vector DBs · Knowledge Graphs

backend & infra Python · FastAPI · Celery · Redis · MongoDB · GCP · Docker · CI/CD · OAuth · Twilio

frontend React · Next.js

My Designs